Introduction to RealityKit

Understanding RealityKit is fundamental for building AR apps for Apple devices. Here's a brief overview of how it's structured.

RealityKit

Overview

If you want to build an AR application, you need rendering, physics, and animation. RealityKit handles all three for you, letting you construct scenes in which your digital content interacts with the real world. It’s a Swift framework that focuses on physically based rendering and accurate object simulation within a real-life environment.

You can think of it as a companion to ARKit: ARKit tracks the device and makes sense of the physical world, while RealityKit - as the name implies - is about rendering and simulating your digital content so that it fits into that world.

Here is a broad overview of all the different parts:

- ARView

- Scene

- Anchor

- Entities

  - Components

  - Materials

ARView

ARView is the view that displays your rendered scene. It lets you:

- Define how shadows work

- Add film-like grain to your virtual objects, so that they match the noise in the camera feed and look like they belong there
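As a rough illustration of those two settings, here is a minimal sketch that assumes an iOS target. The function name `makeARView` is made up for this example; `renderOptions` and the two option names are RealityKit's own.

```swift
import RealityKit
import UIKit

// Hypothetical helper: creates an ARView and adjusts two render options.
@MainActor
func makeARView() -> ARView {
    let arView = ARView(frame: .zero)

    // Opt out of the shadows RealityKit draws beneath virtual objects.
    arView.renderOptions.insert(.disableGroundingShadows)

    // Make sure camera grain is not disabled, so virtual content
    // picks up noise similar to the camera feed.
    arView.renderOptions.remove(.disableCameraGrain)

    return arView
}
```

Render options are opt-out flags: inserting an option switches the effect off, and removing it switches the effect back on.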

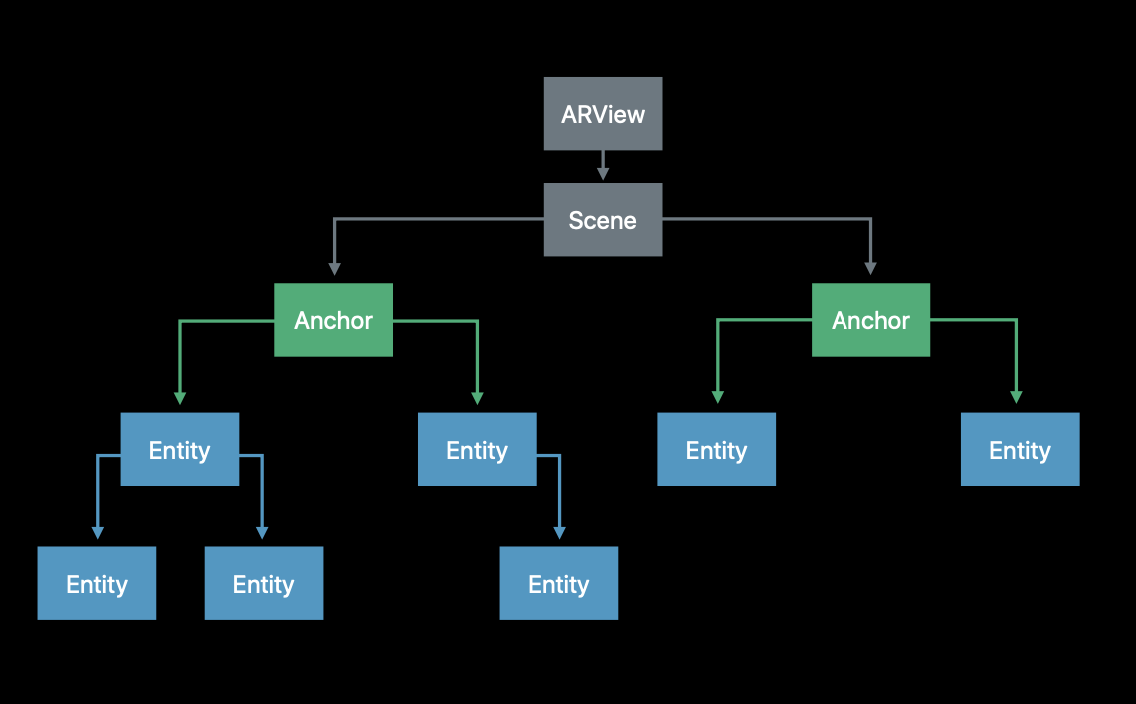

Anchors

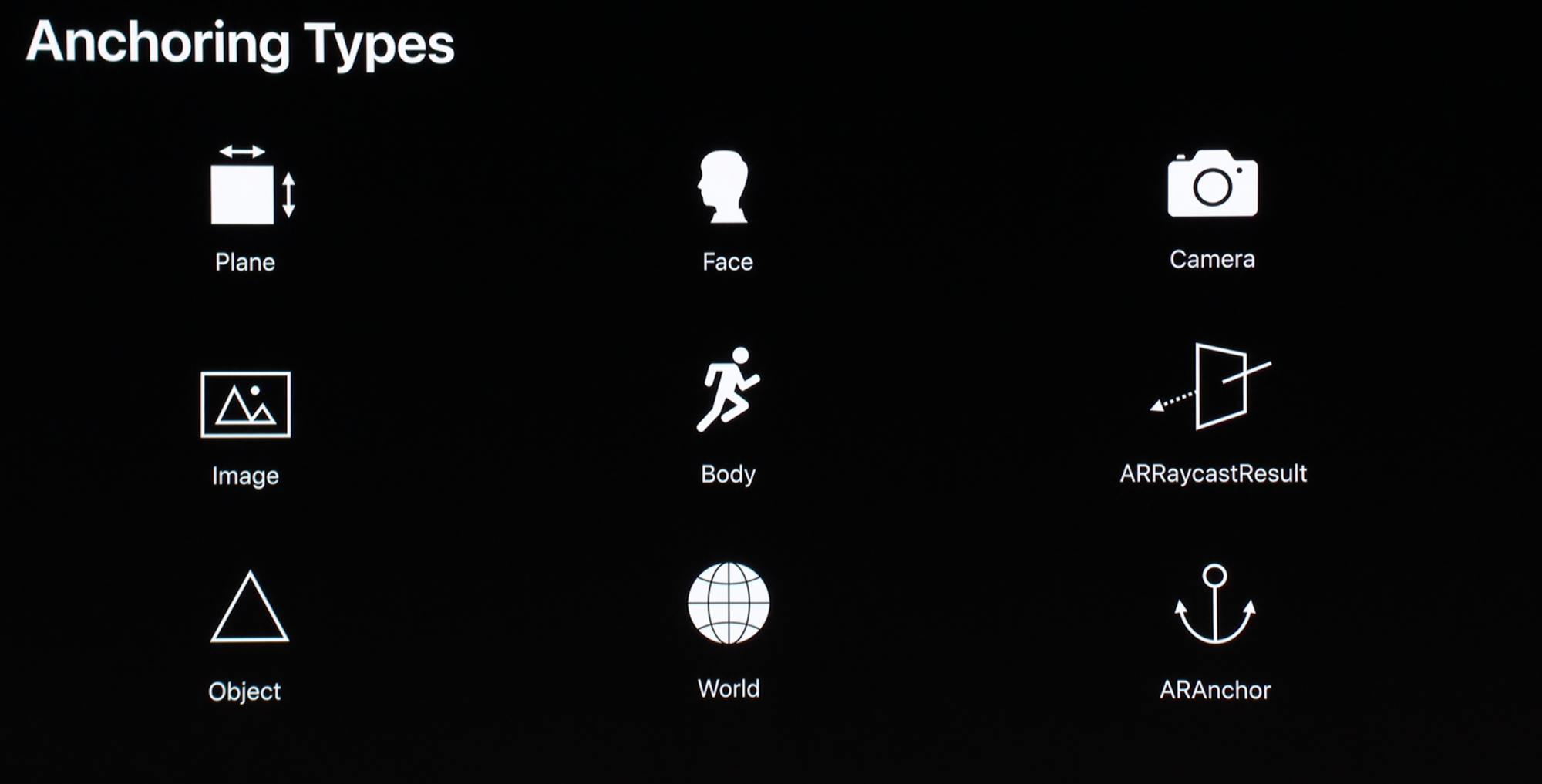

Think of anchors as points that correspond to something in the real world. AR is about placing digital content - for example, a virtual spaceship - inside the real world. So it makes sense that we need to find something to attach it to!

RealityKit is really cool in that an anchor can be a lot of different things. For example, you can use an image printed onto a piece of physical paper, such as a QR code. Or you can use a person’s face, or their body, or even a flat plane (surface) like a tabletop.

An anchor itself is just a point. It doesn’t look like anything in particular, in the same way that an address on a map gives us the coordinates of a building but is not the house itself. That’s what an entity is for.
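The different kinds of anchor described above might be created as follows. This is a sketch assuming an iOS target; the function name and the resource names "AR Resources" and "poster" are placeholders for whatever your own asset catalog contains.

```swift
import RealityKit

// Hypothetical helper: one anchor of each kind mentioned in the text.
@MainActor
func makeExampleAnchors() -> [AnchorEntity] {
    // A horizontal surface such as a tabletop, at least 20 cm x 20 cm.
    let tableAnchor = AnchorEntity(
        .plane(.horizontal, classification: .any, minimumBounds: [0.2, 0.2])
    )

    // A known image; group and name are placeholder asset-catalog names.
    let imageAnchor = AnchorEntity(.image(group: "AR Resources", name: "poster"))

    // A person's face or body.
    let faceAnchor = AnchorEntity(.face)
    let bodyAnchor = AnchorEntity(.body)

    // A fixed point in world space, one metre in front of the origin.
    let worldAnchor = AnchorEntity(world: SIMD3<Float>(0, 0, -1))

    return [tableAnchor, imageAnchor, faceAnchor, bodyAnchor, worldAnchor]
}
```

None of these anchors draws anything by itself; each only describes where content should go once it is attached.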

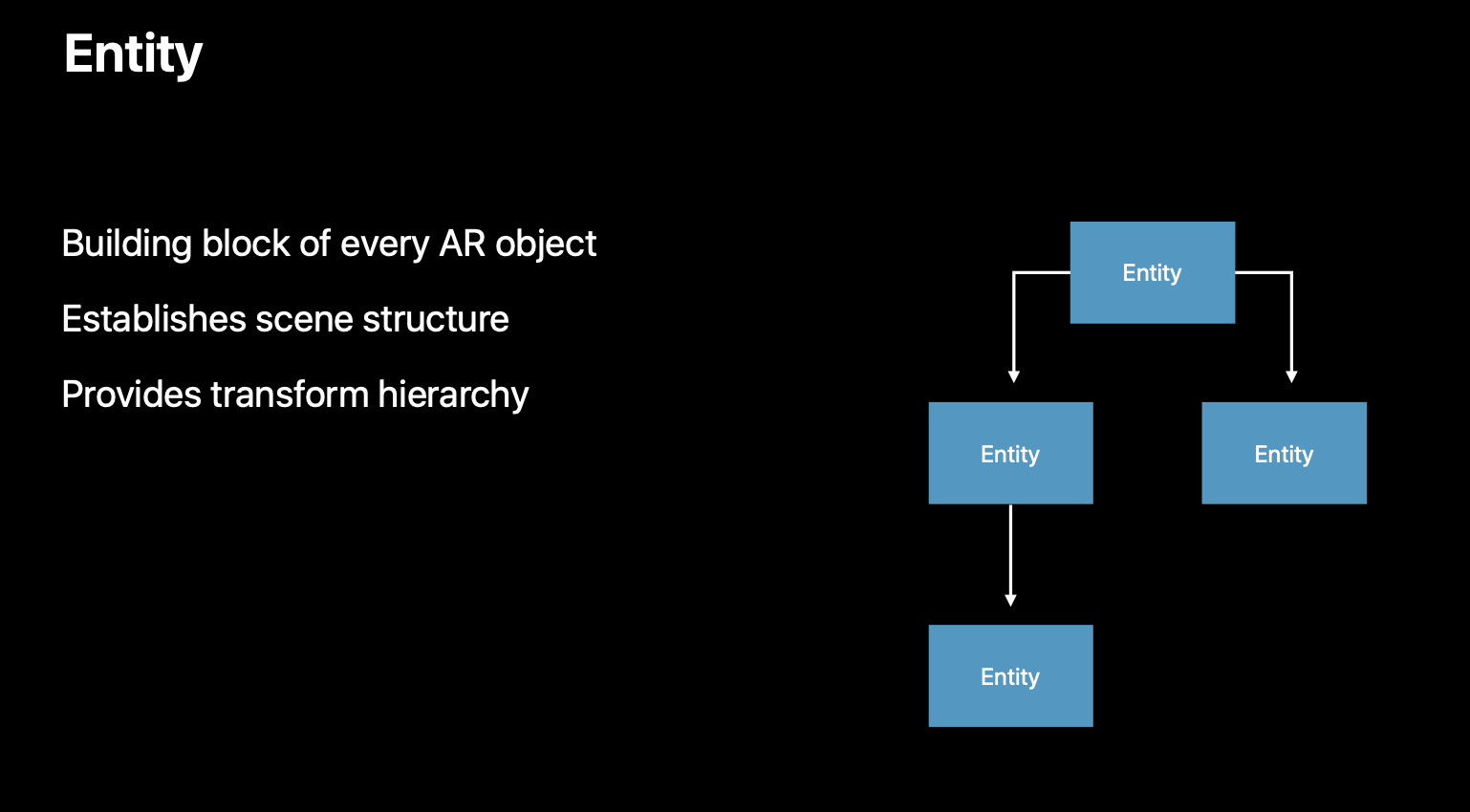

Entities

If an anchor is a location, then the entity is the house itself. A simple example of an entity would be the spaceship we talked about earlier. Entities are what we put into our scene, and they make up the things that we actually see in AR. If anchors are locations within the world, think of an entity as “a thing” within the world that can interact and be interacted with.

In other words, entities can be attached to anchors. Anchors are attached to the world.
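That chain (entity, then anchor, then world) can be sketched like this, assuming an iOS target. The function name `place(_:in:)` is invented for this example.

```swift
import RealityKit

// Hypothetical helper: attaches an entity to an anchor,
// then attaches that anchor to the scene shown by an ARView.
@MainActor
func place(_ entity: Entity, in arView: ARView) {
    // The anchor resolves to a horizontal surface in the real world.
    let anchor = AnchorEntity(
        .plane(.horizontal, classification: .any, minimumBounds: [0.2, 0.2])
    )

    // Entity -> anchor
    anchor.addChild(entity)

    // Anchor -> world (the scene)
    arView.scene.addAnchor(anchor)
}
```

The entity only becomes visible once RealityKit finds a real surface that satisfies the anchor.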

There are many different types of entities that RealityKit comes pre-configured with for easy use.

An anchor can itself be an entity, in which case it is called an AnchorEntity.

Or we can attach an actual model that we can see. And, you guessed it, it’s called a ModelEntity. A ModelEntity is basically a 3D object placed in the world.

The ModelEntity itself is composed of two things:

- A Mesh Resource, which defines its shape…

- …and Materials, which define how it appears: color, roughness, how metallic it looks, those sorts of things. If you’ve ever worked with other 3D software before, a material is roughly a shader together with the textures it uses.
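Putting the two parts together, a ModelEntity can be built from a mesh and a list of materials. The sketch below uses a generated box and a SimpleMaterial; the function name and the specific sizes and colors are arbitrary choices for illustration.

```swift
import RealityKit

// Hypothetical helper: a 10 cm metallic grey cube.
@MainActor
func makeMetalBox() -> ModelEntity {
    // The mesh defines the shape.
    let mesh = MeshResource.generateBox(size: 0.1)

    // The material defines the appearance.
    let material = SimpleMaterial(color: .gray, roughness: 0.2, isMetallic: true)

    return ModelEntity(mesh: mesh, materials: [material])
}
```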

Because Apple likes to make things easier for us, they give us some materials to use for free. For example, SimpleMaterial.

Importantly, objects look realistic because they (conceptually) interact with light from the real world, and so appear like they belong there. With SimpleMaterial, when the room gets dark, any entity covered in it looks darker to match.

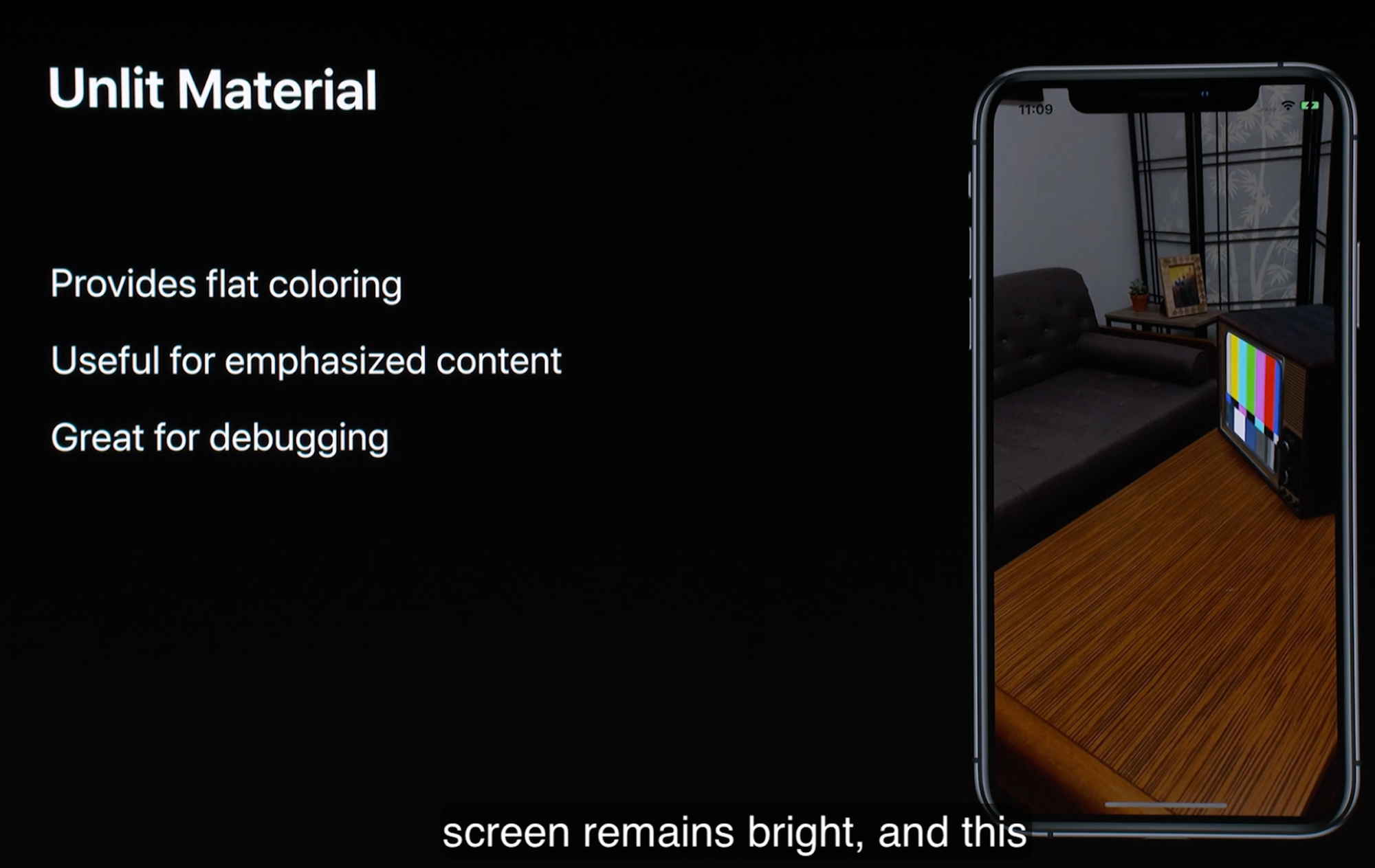

But, if you want your object to shine like a neon globe without being affected by light sources - a real-world example would be a TV screen, which burns your eyes when you turn the room light off and leave it glowing during your Netflix binge - then you can simply use UnlitMaterial for that.
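A minimal sketch of the TV-screen idea, with an invented function name and arbitrary dimensions. Note that an unlit material keeps its own color regardless of lighting, but as far as this sketch goes it does not cast light onto neighbouring objects.

```swift
import RealityKit

// Hypothetical helper: a flat white "screen" that ignores scene lighting.
@MainActor
func makeGlowingScreen() -> ModelEntity {
    // A vertical plane, 40 cm wide and 22.5 cm tall (16:9).
    let mesh = MeshResource.generatePlane(width: 0.4, height: 0.225)

    // UnlitMaterial renders the same color in a bright or a dark room.
    let material = UnlitMaterial(color: .white)

    return ModelEntity(mesh: mesh, materials: [material])
}
```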

OcclusionMaterial is really interesting. In technical terms, it is an invisible material that hides whatever is rendered behind it, so the camera video shows through instead. In practical terms, you apply it to a virtual stand-in for a real-world object, so that if one of your digital objects goes behind that object, the real world blocks you from seeing it. The best way to illustrate this is the example below, where the table itself has an OcclusionMaterial applied to it.
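For the table example, one way to sketch this is an invisible plane the size of the tabletop. The function name and its parameters are assumptions for illustration; in a real app you would size and position the plane to match the detected table.

```swift
import RealityKit

// Hypothetical helper: an invisible stand-in for a real tabletop.
@MainActor
func makeTableOccluder(width: Float, depth: Float) -> ModelEntity {
    // A horizontal plane matching the tabletop's footprint, in metres.
    let mesh = MeshResource.generatePlane(width: width, depth: depth)

    // The occluder itself is never drawn; it only hides virtual
    // content that ends up behind or beneath it.
    return ModelEntity(mesh: mesh, materials: [OcclusionMaterial()])
}
```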

Components

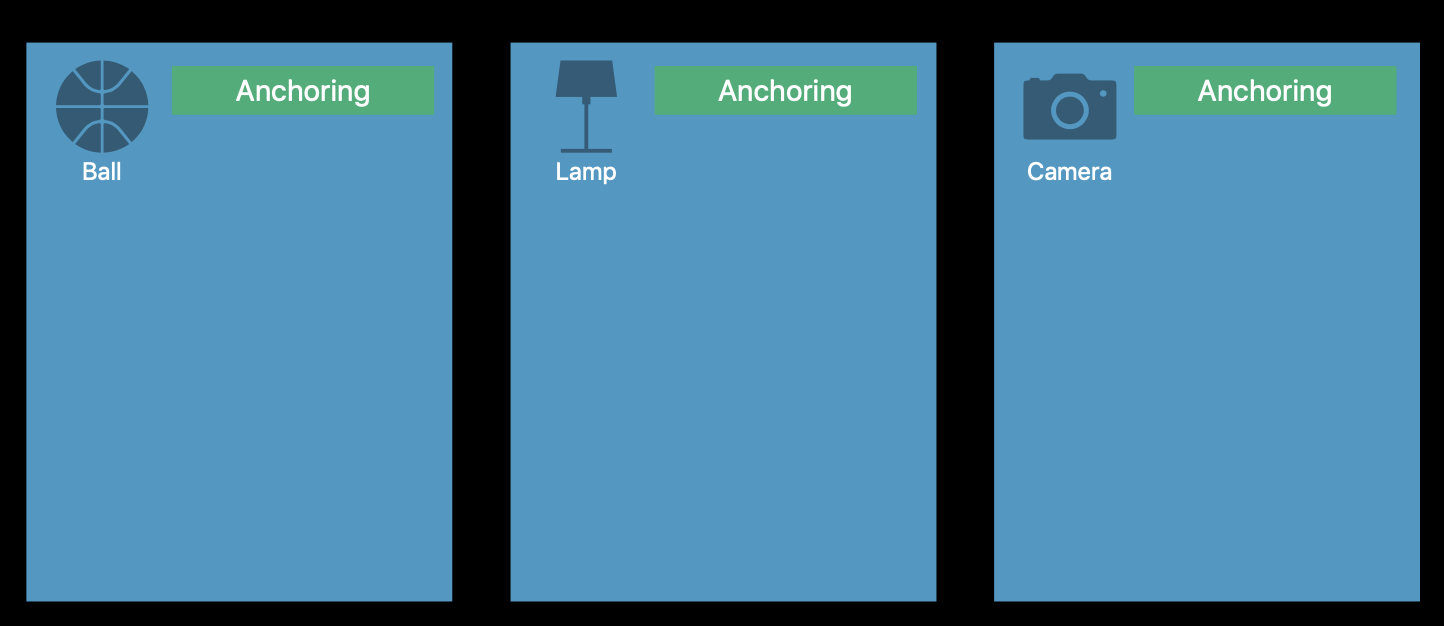

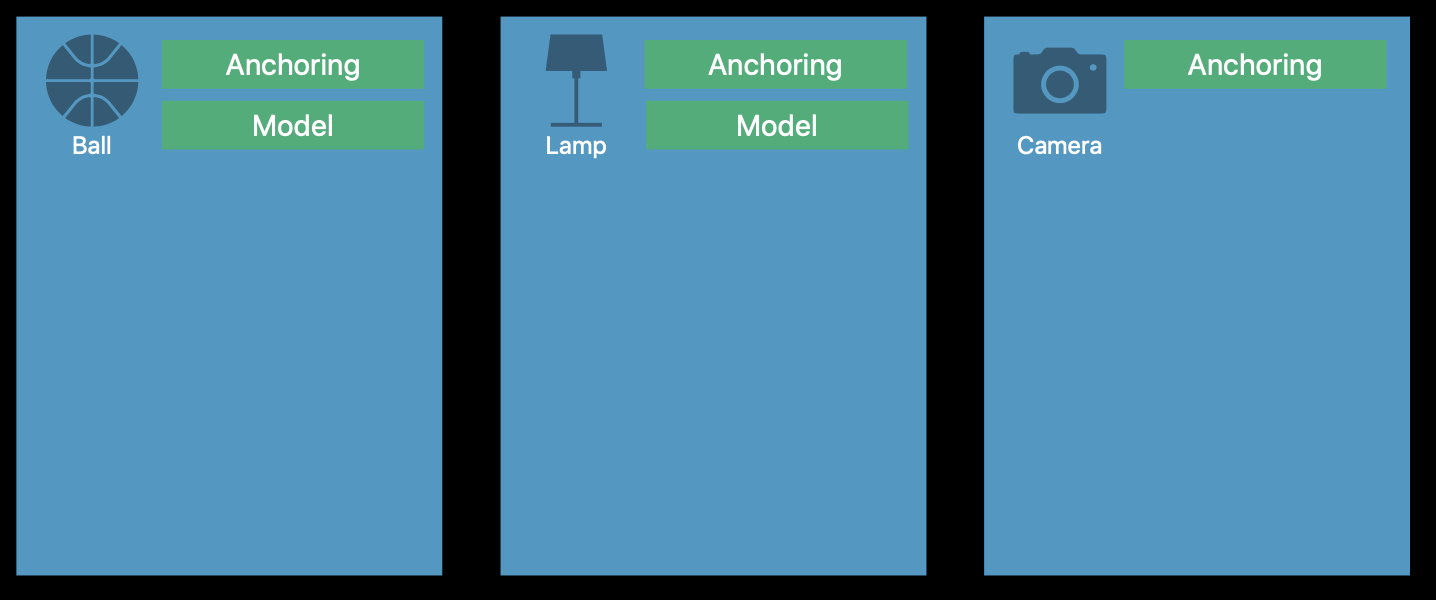

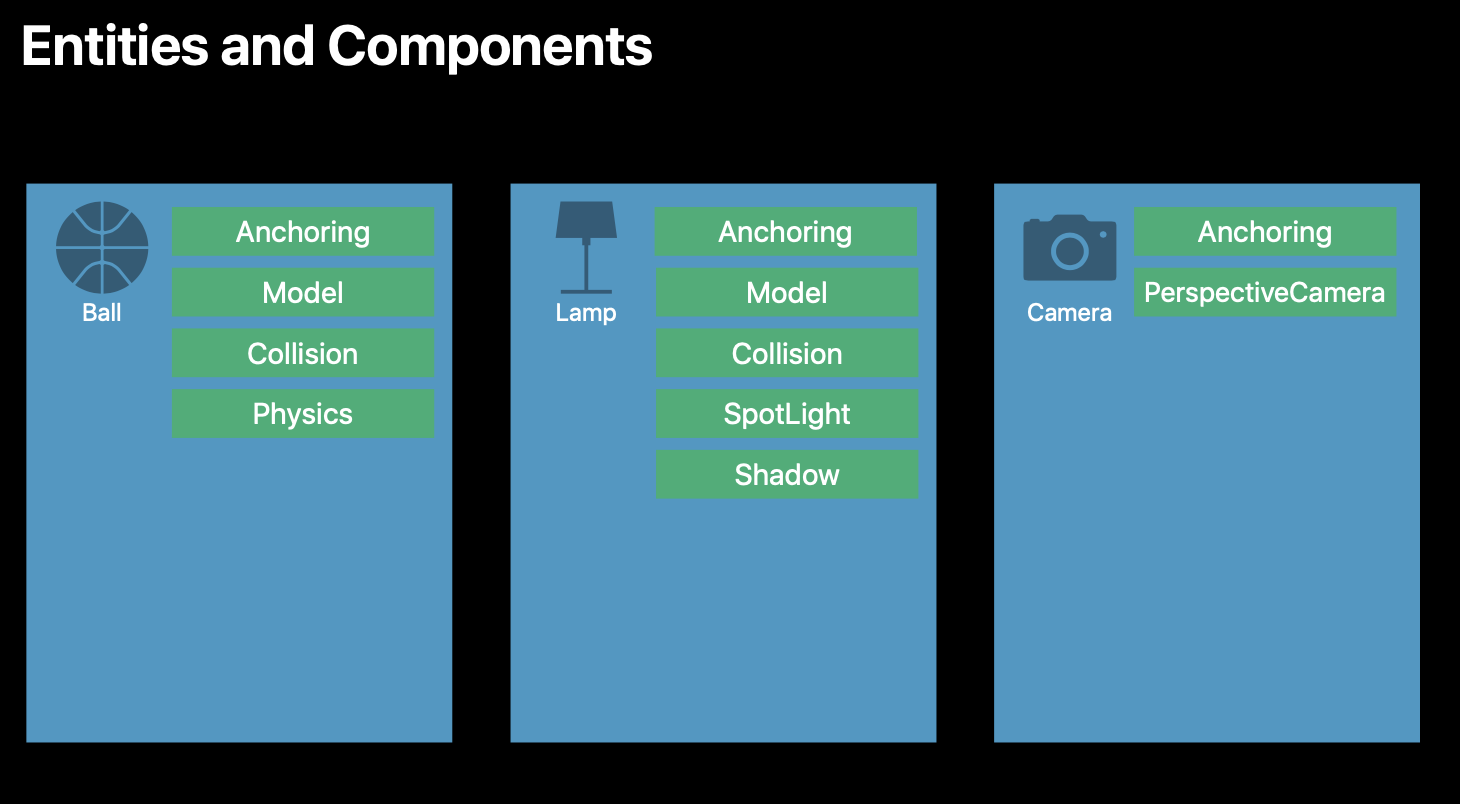

So having an entity is great, but your next question then becomes…what can my entity actually do? And this is where Components come in. Essentially, they define what your entities can do.

Some components are needed by every kind of entity. For example, every entity needs a position and orientation in the scene, so they all have a Transform component. Anchor entities additionally carry an Anchoring component, which ties them to the real world.

But then, for example, a Camera doesn’t need to have a physical model to represent it in your AR world. But a basketball and a lamp would.

And so components define the properties that each entity in your AR world needs. This is also great for performance: when the same kinds of component are used by many different entities, RealityKit can process them in a highly optimised way.
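To make the idea concrete, here is a sketch of the basketball from the text: it gets a model so that it can be seen, and then gains collision and physics behaviour through extra components. The function name and the radius are arbitrary; the component types are RealityKit's own.

```swift
import RealityKit

// Hypothetical helper: a ball that is visible and takes part in physics.
@MainActor
func makeBasketball() -> ModelEntity {
    let radius: Float = 0.12

    // ModelEntity carries a ModelComponent, so it has a visible shape.
    let ball = ModelEntity(
        mesh: .generateSphere(radius: radius),
        materials: [SimpleMaterial(color: .orange, roughness: 0.8, isMetallic: false)]
    )

    // Components add abilities: a collision shape...
    ball.components.set(CollisionComponent(shapes: [.generateSphere(radius: radius)]))

    // ...and a dynamic physics body, so that forces and gravity apply.
    ball.components.set(
        PhysicsBodyComponent(massProperties: .default, material: nil, mode: .dynamic)
    )

    return ball
}
```

A plain `Entity()`, by contrast, has a Transform but no ModelComponent, which you can check with `entity.components.has(ModelComponent.self)`.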

Animations

RealityKit can play skeletal and transform animations and apply them to entities. You can also set these up in Reality Composer.
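Both kinds of animation can be sketched in a few lines. The two function names are invented for this example; it assumes that any skeletal animation arrives with the loaded model (for instance from a USDZ file), which is why the first function simply plays whatever the entity already provides.

```swift
import Foundation
import RealityKit

// Hypothetical helper: loops the first animation bundled with an entity,
// such as a skeletal animation imported with its model.
@MainActor
func playFirstAnimation(on entity: Entity) {
    guard let animation = entity.availableAnimations.first else { return }
    entity.playAnimation(animation.repeat())
}

// Hypothetical helper: a transform animation that slides an entity
// by `offset` (in metres) over `duration` seconds.
@MainActor
func slide(_ entity: Entity, by offset: SIMD3<Float>, duration: TimeInterval) {
    var target = entity.transform
    target.translation += offset
    entity.move(to: target, relativeTo: entity.parent,
                duration: duration, timingFunction: .easeInOut)
}
```

For example, `slide(spaceship, by: [0, 0.5, 0], duration: 2)` would raise a hypothetical `spaceship` entity by half a metre over two seconds.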